The current pandemic creates several challenges in our private and business lives. Besides the big ones, there are also smaller challenges in private life. One thing that is currently not possible is getting together with friends on a weekend. This leads to the question of whether such a get-together could be organized virtually. So, what does the traditional setup look like? Usually, somewhere between five and twenty people come together at a private event. One of them acts as the DJ, one or more tend the bar, on some occasions you’ll find something to eat, and the rest arrive in high spirits and ensure a nice evening.

The scenario

In this article, I would like to show an example setup for an interactive virtual get-together. Obviously, a few things, like the bar and the food, can’t easily be virtualized. But the rest should be possible in principle. My first idea was to simply use one of the video meeting platforms that have become important in business life. This is possible to a certain degree, but it is quite limiting when it comes to adding music. The alternative was a live stream on one of the popular streaming platforms. Although this works quite well on a variety of end devices, it comes with the nightmare of intellectual property rights. Simply turning on the radio and sharing the music with the other people in the room isn’t possible.

As is already obvious, it’s not as easy as I originally thought. My main focus was on the music, which is not surprising for a part-time DJ. That said, the architecture needs several capabilities. First, the music mix needs to be distributed. Second, it would be cool to stream the DJ at work. Third, there should be a feedback channel (some kind of written chat, as voice is a bit more complicated). Finally, some kind of participant video wall would be nice (I currently have no clue how to realize this). As mentioned earlier, the variety of end-user devices should be considered and ideally handled consistently.

The DJ part

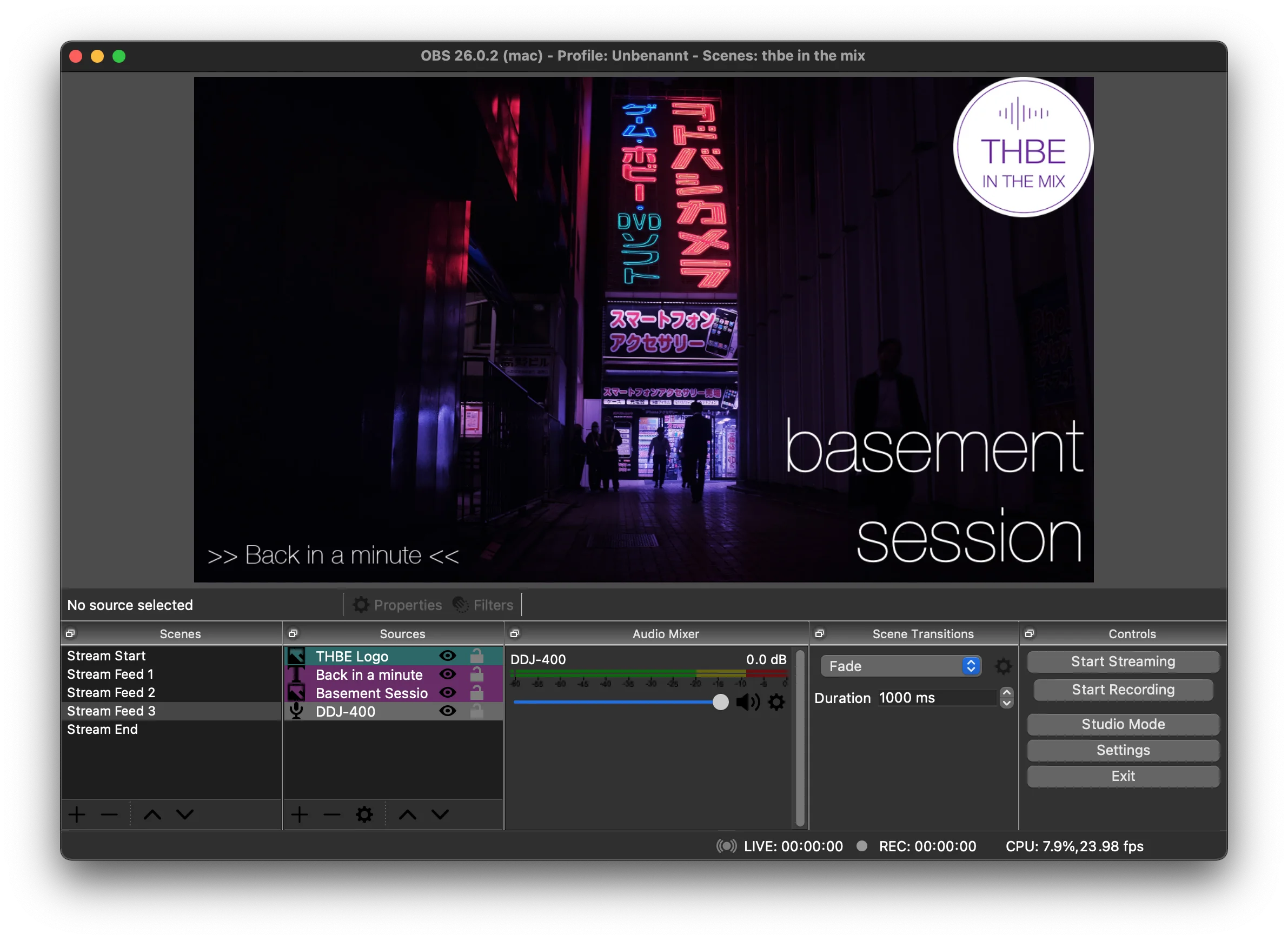

Providing a stream with music and video is a bit tricky, but not rocket science. The centerpiece I use is an open-source application called Open Broadcaster Software (OBS). Simply put, OBS can combine several different input sources in real time and create a stream out of them. The resulting stream can be sent to well-known streaming platforms, re-streaming platforms, and custom platforms (which I will use later on). OBS adds some spice on top: you can group your inputs into scenes, and you can apply filters and other effects.

The tricky bit is that OBS can’t run in a distributed model, which means you need to run your mixing software, your cameras, your effects, your streaming encoder, and so on, on a single computer. OK, honestly, this is not one hundred percent true. Assuming the respective hardware, such as external sound cards, is in place, you can use the mixer output as an input for the OBS instance. This allows you to run the mixer software on a different machine than OBS. But this comes at additional cost and wasn’t used in the proof of concept described here.

Caution: Use a powerful computer for OBS. Video and audio processing is quite CPU- and RAM-intensive. Older laptops in particular have problems performing all those tasks without short outages.

The streaming part

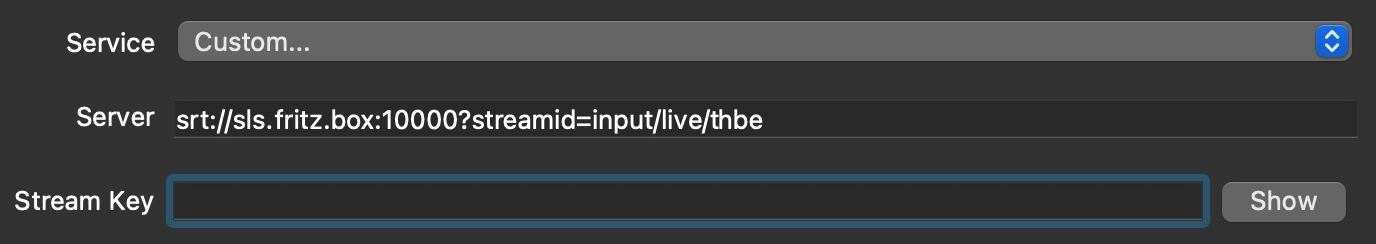

Once the input sources are in place and a stream output is created, the data needs to be transmitted to the respective end-user devices. After digging around for a while, I found the Secure Reliable Transport (SRT) protocol. It is quite new, it is open source, and it should provide reliable stream quality. Although it’s not widely used yet, I thought it might be worth a try. Clients are available for Android, Apple devices, and the normal PC world, and I saw an open-source server implementation as well. Unfortunately, I had to do some upfront work to get the server up and running. The idea was to run it in a lightweight container in order to, first, reduce the load on the OBS computer and, second, be able to shift the service to the cloud if required. The result is an SRT Live Server (SLS) Docker image. Using the SLS server is quite simple: one URL is used to deliver the stream to the SLS server, and another URL is used by clients to connect to the stream. In OBS, for example, you configure the first URL as the target to which the stream is transmitted.
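To make the two URLs more concrete, here is a minimal sketch of how they could be assembled. It assumes the defaults from the sample configuration of the open-source SRT Live Server project (port 8080, publisher domain `uplive.sls.com`, player domain `live.sls.com`, application `live`); a concrete deployment may well use different values. The host address and the stream name `party` are placeholders, and the function names are made up for this illustration.

```shell
# Sketch only: build the publish and play URLs for an SRT Live Server (SLS).
# ASSUMPTION: port, domains, and app name follow the SLS sample sls.conf;
# override them via environment variables if your server differs.
SLS_PORT="${SLS_PORT:-8080}"
SLS_PUBLISH_DOMAIN="${SLS_PUBLISH_DOMAIN:-uplive.sls.com}"
SLS_PLAY_DOMAIN="${SLS_PLAY_DOMAIN:-live.sls.com}"
SLS_APP="${SLS_APP:-live}"

# srt_url HOST DOMAIN STREAM
# prints srt://HOST:PORT?streamid=DOMAIN/APP/STREAM
srt_url() {
    printf 'srt://%s:%s?streamid=%s/%s/%s\n' \
        "$1" "$SLS_PORT" "$2" "$SLS_APP" "$3"
}

# URL the publisher (OBS) sends the stream to.
publish_url() {
    srt_url "$1" "$SLS_PUBLISH_DOMAIN" "$2"
}

# URL the players connect to.
play_url() {
    srt_url "$1" "$SLS_PLAY_DOMAIN" "$2"
}

# Placeholder host and stream name, purely for illustration.
publish_url 192.168.1.10 party
play_url 192.168.1.10 party
```

The first printed line is what would be entered in OBS as the stream target, the second is what a player on the client side would open. The stream name is the only part both sides have to agree on.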

On the client side, you need a player that is able to connect to the SRT server. As I primarily use Apple devices, I use Larix Player on the client side. It pretty much satisfies one of the key requirements: being able to use as many different devices as possible. It runs on a phone, a tablet, and the Apple TV. Similar players are available for Android as well.

The chat

By far the easiest option would be to use some kind of WhatsApp group. But as the idea is a virtual get-together, the feedback should be included in the live feed. For this we need, for example, a web chat that we can include in the live feed generated by OBS. One option would be to run the free version of Rocket.Chat as a Docker container. This can easily be done with:

> mkdir rocket.chat && cd rocket.chat

> curl -L https://raw.githubusercontent.com/RocketChat/Rocket.Chat/develop/docker-compose.yml -o docker-compose.yml

> mkdir -p data/db data/dump scripts uploads

> docker-compose up -d

The chat can be accessed with the respective mobile or desktop apps. This creates a feedback channel that can be integrated into the live feed.
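After `docker-compose up -d` returns, the chat server may still need a moment before it answers requests. The following is a small, generic retry helper that could be used to wait for it. The helper name `wait_for` is made up for this sketch, and the URL in the usage comment assumes that Rocket.Chat listens on port 3000 on the local machine, which depends on the compose file in use.

```shell
# Sketch only: retry a command until it succeeds or the attempts run out.
# wait_for TRIES DELAY CMD [ARGS...]
#   TRIES  maximum number of attempts
#   DELAY  seconds to sleep after a failed attempt
# Returns 0 as soon as CMD succeeds, 1 if every attempt failed.
wait_for() {
    tries=$1
    delay=$2
    shift 2
    i=0
    while [ "$i" -lt "$tries" ]; do
        # Run the probe command quietly; only its exit status matters.
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Example usage (ASSUMPTION: Rocket.Chat is reachable on localhost:3000):
#   wait_for 30 2 curl -fsS http://localhost:3000/api/info \
#       && echo "chat is up" || echo "chat did not come up"
```

With the values in the usage comment, the check would give up after roughly a minute. Both numbers are arbitrary and can be adjusted to the machine at hand.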

The video wall

So, where are we? We have a music/DJ channel, we have a streaming endpoint, and we have a text-based feedback channel. The complete platform is open source and privately managed. The only open topic is the visual feedback. I thought of something like the virtual spectator walls you see, for example, at several sports events. Unfortunately, as mentioned earlier, I don’t have a good idea how this could be realized. If someone has a good idea, or if someone thinks a virtual get-together could be realized in an easier way, I would be happy to hear more about it.